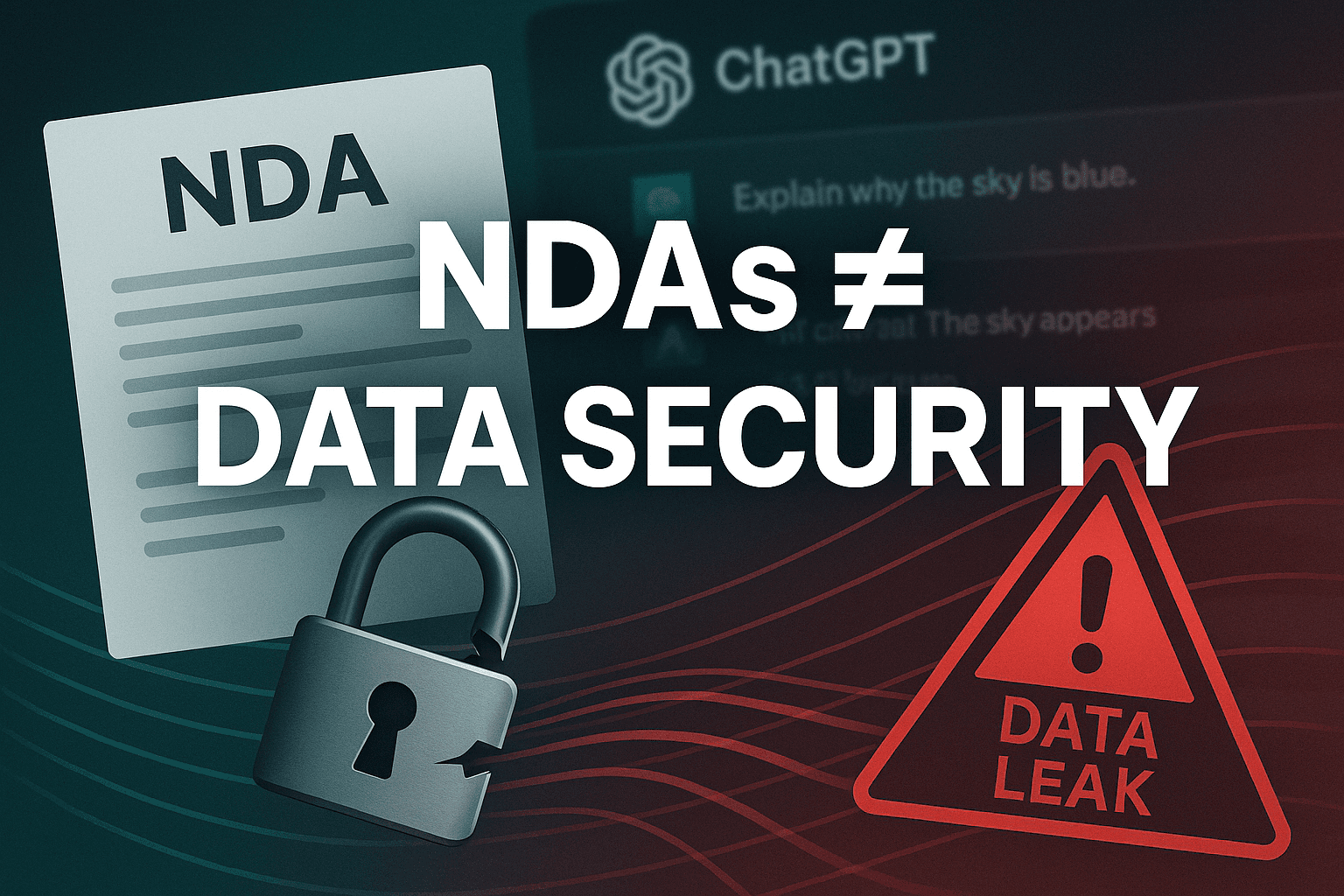

Can’t We Just Use ChatGPT with an NDA?

The Risky Illusion of Legal Safety When It Comes to AI

Multidisciplinary security engineer with deep experience across Blue Team operations, DevSecOps automation, and full-stack development. Passionate about building secure systems, scaling security through automation, and leading teams to solve real-world problems. While I specialize in defensive security, I occasionally venture into red teaming to understand both sides of the game. Keen explorer of AI/ML in security, and always up for a good scripting challenge.

💻 Tech Stack

Languages: Python, JavaScript/TypeScript, Bash, Go

Frontend: React, Next.js

Backend: Node.js, Express, Flask

Cloud: AWS, GCP, Azure Security

Security: SIEM, EDRs, Threat Hunting, Incident Response, Burp Suite

DevSecOps: Terraform, GitHub Actions, Docker, Snyk, Trivy

AI/ML: Scikit-learn, TensorFlow, LLMs for security use cases

Automation: CI/CD pipelines, Infra-as-Code, Detection-as-Code

TL;DR (Too Long; Didn’t Read)

An NDA with the company behind ChatGPT, or with any cloud AI provider, only protects you legally, not technically. Your data can still be stored, logged, leaked, or misused, and you carry the risk. If you can’t afford a leak, don’t upload it. For real security, use a self-hosted or private AI model, or talk to a security expert before trusting sensitive data to the cloud.

Let’s Get Real for a Minute

You’re busy. You want AI to help with your business: drafting strategies, summarizing contracts, brainstorming ideas.

Then it hits you:

“Is it safe to give this AI my sensitive info?

Well, I’ll just sign an NDA—problem solved, right?”

If only. That’s like locking your office door but leaving your confidential files on the reception desk.

1️⃣ NDAs Stop Lawyers, Not Hackers

A Non-Disclosure Agreement says, “If you leak my secrets, I’ll take you to court.”

But NDAs do nothing to stop:

Hackers

Insider threats

Bugs or misconfigurations

Accidental data exposure

They only help after the breach—and by then, your secrets are already public.

2️⃣ Where Does Your Data Really Go?

Closing your browser ≠ deleting your data.

Depending on the plan and settings, many AI providers:

Store prompts, chats, and uploads for months (sometimes indefinitely)

May use them for training (“to improve the model”)

Operate in jurisdictions where you have zero visibility or control

You can’t inspect their servers. You can’t prove your data is gone.

3️⃣ Compliance Doesn’t Care About Your NDA

If you handle regulated data—customer PII, financials, health info—sending it to cloud AI is a compliance minefield.

GDPR / DPDP Act / HIPAA penalties apply to you, not just the AI vendor

Jurisdiction matters—your NDA won’t override U.S., EU, or Indian data laws

4️⃣ “We’ll Sue If It Leaks!”

Let’s be real:

Cross-border lawsuits are slow and expensive

Winning in court rarely repairs your brand damage

Many businesses never fully recover from a major leak

A Quick Scenario

Your team uploads your confidential product roadmap to ChatGPT for content ideas.

Later, the provider suffers a breach.

Your NDA? Just paper.

Your roadmap? Everywhere.

The damage? Permanent.

The Smarter Play

Sensitive data? Keep it off cloud AI. Use self-hosted, air-gapped models.

Generic tasks? Cloud tools are fine—just strip out identifying details.

Compliance or security-critical work? Use technical controls, not just contracts.
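To make "strip out identifying details" a bit more concrete, here’s a minimal Python sketch of redacting text before it goes to a cloud tool. The `sanitize` function, the patterns, and the placeholder labels are my own illustrative assumptions, not a vetted PII detector: regexes catch well-structured identifiers like emails and card numbers, miss plenty of others (names buried in free text, addresses), and can over-match things like dates. The explicit term list is where you’d put client names or project codenames.

```python
import re
from typing import Sequence

# Illustrative patterns only (assumption): they catch well-structured
# identifiers, miss many kinds of sensitive data, and may over-match.
# Order matters: card numbers are replaced before the looser phone pattern runs.
PATTERNS = [
    ("EMAIL", re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")),
    ("CARD", re.compile(r"\b(?:\d[ -]?){12,15}\d\b")),
    ("PHONE", re.compile(r"\+?\d[\d\s().-]{7,}\d")),
]


def sanitize(text: str, sensitive_terms: Sequence[str] = ()) -> str:
    """Replace known sensitive terms and pattern matches with placeholders."""
    # Redact explicit terms first (client names, project codenames, ...),
    # longest first so "Acme Corp" is handled before "Acme".
    for term in sorted(sensitive_terms, key=len, reverse=True):
        text = re.sub(re.escape(term), "[REDACTED]", text, flags=re.IGNORECASE)
    # Then replace anything matching the generic patterns, in list order.
    for label, pattern in PATTERNS:
        text = pattern.sub(f"[{label}]", text)
    return text
```

For example, `sanitize("Email jane.doe@example.com or call +1 (555) 123-4567 about Project Falcon. Card: 4111 1111 1111 1111.", ["Project Falcon"])` returns `"Email [EMAIL] or call [PHONE] about [REDACTED]. Card: [CARD]."`. Treat a filter like this as a safety net for generic tasks, not as permission to paste truly sensitive material.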

Bottom Line

NDAs are legal safety nets—not shields.

In the AI age, trust is good, control is better.

If you can’t afford the consequences of a leak, don’t upload it—ever.

Mitigation (TL;DR)

To work safely with AI:

Sanitize before sharing: remove names, IDs, and any other sensitive details.

Choose secure AI setups: self-hosted models, or enterprise AI plans with zero data retention.

Enforce controls: encrypt data, limit access, and audit AI use regularly.
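Here’s a rough sketch of what "limit access" and "audit AI use" could look like in practice: a single Python wrapper that every AI call goes through. Everything in it is a hypothetical assumption for illustration: the `ALLOWED_USERS` set, the `BLOCKED_MARKERS` labels, the `guarded_ai_call` name, the JSON-lines log file, and `send_fn`, which stands in for whatever client actually talks to your approved model. It doesn’t cover encryption.

```python
import hashlib
import json
import time
from typing import Callable

# Hypothetical policy values for illustration; adapt to your own organisation.
ALLOWED_USERS = {"alice", "bob"}
BLOCKED_MARKERS = ("CONFIDENTIAL", "INTERNAL ONLY")


class PolicyError(Exception):
    """Raised when a prompt is not allowed to leave the organisation."""


def guarded_ai_call(user: str, prompt: str, send_fn: Callable[[str], str],
                    audit_path: str = "ai_audit.jsonl") -> str:
    """Check access and content policy, write an audit record, then call send_fn."""
    decision = "allowed"
    if user not in ALLOWED_USERS:
        decision = "denied:user"
    elif any(marker in prompt.upper() for marker in BLOCKED_MARKERS):
        decision = "denied:marker"

    record = {
        "ts": time.time(),
        "user": user,
        # Store a hash, not the prompt, so the audit log is not itself a leak.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "decision": decision,
    }
    # Every attempt is logged, including denied ones, before anything is sent.
    with open(audit_path, "a", encoding="utf-8") as log:
        log.write(json.dumps(record) + "\n")

    if decision != "allowed":
        raise PolicyError(decision)
    return send_fn(prompt)
```

Logging a SHA-256 hash instead of the prompt lets you check later whether a specific, exact text was sent, without turning the audit log into a second copy of your secrets. A keyword check like this is a crude guardrail, not a replacement for proper data classification or DLP tooling.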

If you can’t afford a leak, don’t upload.

NDAs are there for legal backup—not actual security.

Would Love to Talk About Data Security

I’m always up for a good conversation—especially about AI, privacy, and security.

If you’ve got a challenge, a question, or just want a fresh perspective, let’s chat.

Book a 30-min slot: https://cal.com/rahulj/30min

Happy to share what I know, brainstorm together, or just listen.